Last Week in AI #145: DeepMind AI helps discover new math theorems, Timnit Gebru's new research center, Clearview AI warned to stop using UK data, and more!

DeepMind AI proposes new knot theory conjectures, Ex-Googler Timnit Gebru starts her own AI research center, UK regulators warn Clearview AI to stop using illegally obtained face data, and more!

Top News

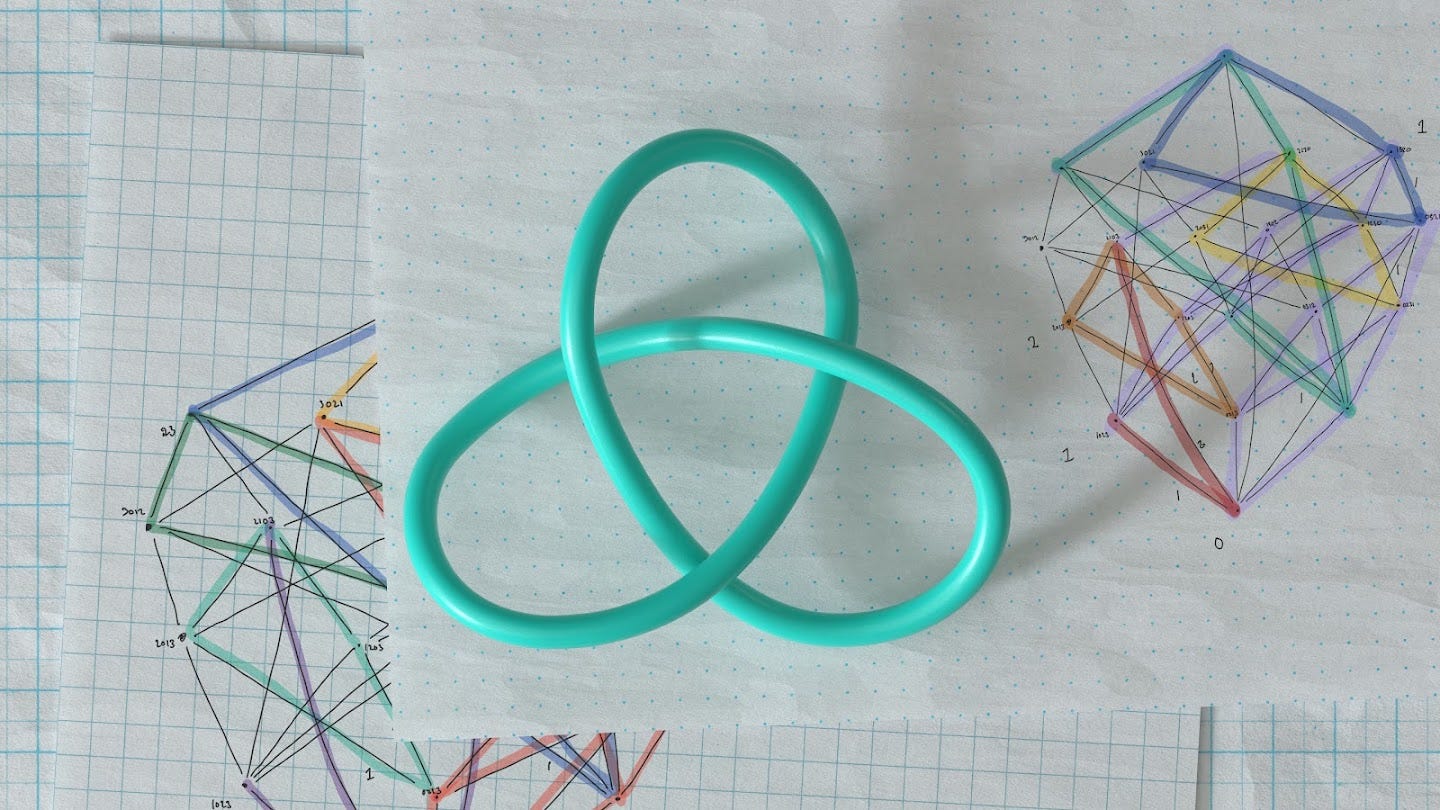

DeepMind AI collaborates with humans on two mathematical breakthroughs

In a recent Nature paper, DeepMind and collaborators demonstrated how AI can help discover new mathematical theorems. In this case, the AI helped propose potential connections between the algebraic and geometric theories of knots, based on a large dataset of knot patterns. Mathem…