Last Week in AI #180: Meta's troubled chatbot, AI in femtech, AI's reproducibility crisis in science, and more!

Meta's AI chatbot spews conspiracy theories, a femtech app applies AI to women's health, and common AI misuses are causing a reproducibility crisis in the sciences.

Top News

Meta’s AI chatbot says Trump will always be president and repeats anti-Semitic conspiracies

Did Meta not learn anything from Microsoft's infamous chatbot Tay? On August 5, Meta released BlenderBot 3, an AI chatbot, to users in the US. As Meta warned, BlenderBot indeed was "likely to make untrue or offensive statements": it described Mark Zuckerberg as "too creepy and manipulative" to a reporter from Insider and claimed Trump was still president and "always will be" to a Wall Street Journal reporter. Users can flag BlenderBot's inappropriate and offensive responses, and Meta claims it has reduced offensive responses by 90 percent.

Our Take: Color me amused and not surprised. I go back and forth on the utility of open-sourcing chatbots like this. On the one hand, I think opening large language model-based systems is good because it allows people to study them. On the other, they spew crap like this and who knows what's going to happen with it.

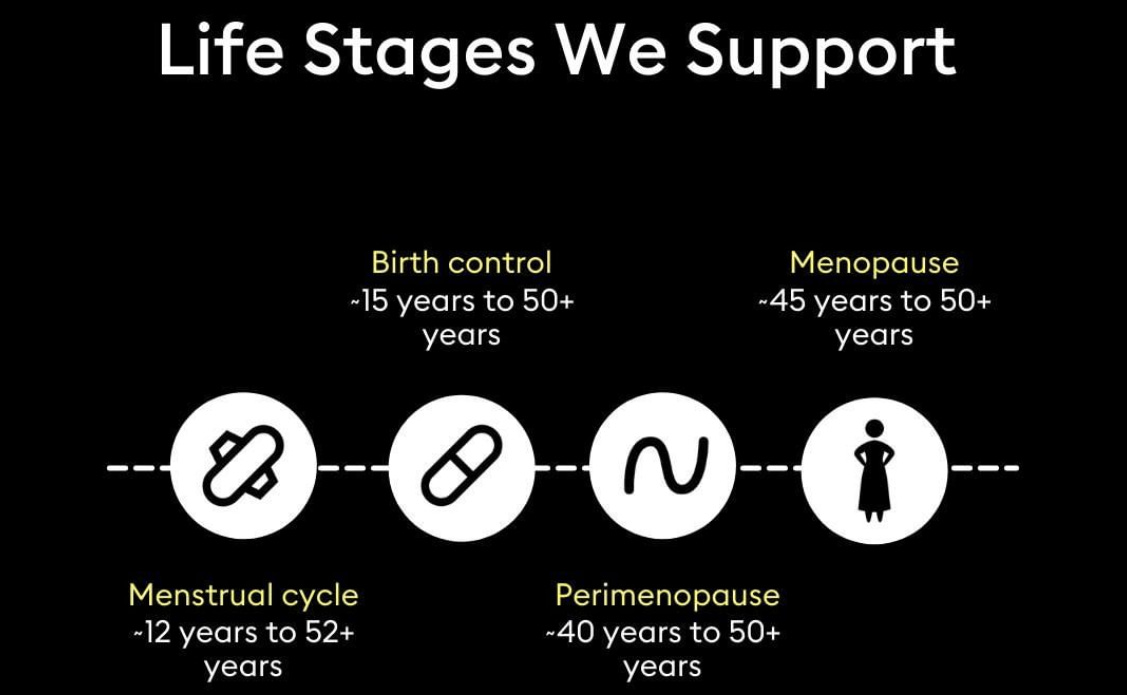

How a femtech app is using A.I. to fill in the gaps for women’s health care

Wild.ai has developed an app that combines manual user inputs with AI-based tracking to help women analyze their vitals and performance, and then makes training, recovery, and nutritional recommendations adapted to their physiology. The idea stemmed from founder Guillaume’s personal needs: she realized that most health and fitness applications are built on data collected mostly from men. Even the small female population included in those data samples is not very diverse. Moreover, every woman's body is different, with varying cycles, bleeding patterns, and circumstances. Using the app, a user can report sleep metrics, stress levels, menstrual symptoms, and digestion issues, and can add external data drawn from a wearable device. The application then aggregates all this data and provides a checklist of targeted recommendations and predictions of negative symptoms.

Our Take: The application sounds very promising, not just for women's health and fitness but also from a sociological perspective. First, the article highlights the large gender bias in existing applications, which are based mostly on research data collected from men and a very small population of women. Second, the article highlights the importance of going beyond a one-size-fits-all solution. Moreover, the application learns from human-in-the-loop feedback and adapts its suggestions to personalize recommendations for each user. This is a great step toward the future Guillaume envisions, in which the need to research women and men separately is no longer in question and products are built around women’s many needs.

Machine Learning Is Causing a ‘Reproducibility Crisis’ in Science

AI-based tools are being used in many scientific research communities, from sociology to medicine. However, not all the scientists using these tools are trained in AI, and improper usage of AI techniques has led to numerous spurious and overclaimed results. For example, a study that claimed it could use AI models to predict civil wars with more than 90 percent accuracy turned out to have used test data in the model’s training set. In other words, the researchers mistakenly “gave” the answers to the AI model during training, so it is no wonder the prediction accuracies were so high. The good news is that scientists are becoming more aware of such AI misuses as well as the limitations of AI tools. Still, the reproducibility crisis is real and should be addressed urgently.

Our Take: Ironically, this problem is exacerbated by how easy it is to get started with AI: the barrier to entry is very low, and merely applying AI does not require much expertise. However, as the article notes, it’s impractical to expect all scientists to become experts in AI. It’s crucial that best practices for AI be shared widely and that sanity-check tools be developed to prevent the most basic errors. At the same time, everyone should be wary of studies that use AI and achieve suspiciously accurate prediction results, as some subtle AI pitfalls are bound to make it through to publication despite researchers’ best intentions.
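To make the "test data in the training set" pitfall concrete, here is a minimal sketch of the kind of sanity check we have in mind. It is not the method used in the study or the article: the function names and the toy rows are our own illustration, and it only catches the simplest form of leakage, where identical rows appear in both splits. Subtler leaks, such as preprocessing fitted on the full dataset, would slip past it.

```python
from typing import Hashable, Iterable, List, Sequence, Tuple

# A row is a tuple of hashable feature values (hypothetical layout).
Row = Tuple[Hashable, ...]


def find_leaked_rows(train_rows: Iterable[Row], test_rows: Sequence[Row]) -> List[int]:
    """Return the indices of test rows that also appear verbatim in the training set."""
    seen = set(train_rows)
    return [i for i, row in enumerate(test_rows) if row in seen]


def leakage_fraction(train_rows: Iterable[Row], test_rows: Sequence[Row]) -> float:
    """Return the share of the test set that overlaps with the training set."""
    if not test_rows:
        return 0.0
    return len(find_leaked_rows(train_rows, test_rows)) / len(test_rows)


if __name__ == "__main__":
    # Toy data, invented for illustration only.
    train = [("country_a", 1990, 0.3), ("country_b", 1991, 0.7), ("country_c", 1992, 0.1)]
    test = [("country_b", 1991, 0.7), ("country_d", 1993, 0.5)]

    print(find_leaked_rows(train, test))   # [0]
    print(leakage_fraction(train, test))   # 0.5
```

A nonzero fraction does not prove that a result is wrong, but it is a cheap signal that the reported accuracy deserves a second look before publication.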

Other News

Research

Hyundai announces $400M AI, robotics institute powered by Boston Dynamics - “This morning, the company announced that the robotics firm will form the foundations of the Boston Dynamics AI Institute, which aims to advance research in artificial intelligence and robotics.”

Johns Hopkins’ heart-scanning AI predicts cardiac arrests up to 10 years ahead - "Researchers at Johns Hopkins University have developed an artificial intelligence approach they say can help predict if and when a person could die of cardiac arrest based on imaging scans of the heart."

In simulation of how water freezes, artificial intelligence breaks the ice - "The resulting simulation describes how water molecules transition into solid ice with quantum accuracy."

Wearable AI Sensor Supports Personalized Health Data Processing, Analysis - "Researchers at the University of Chicago have created a wearable computing chip, which can analyze a person’s health data in real time using artificial intelligence."

AI pilot can navigate crowded airspace - "A team of researchers at Carnegie Mellon University believe they have developed the first AI pilot that enables autonomous aircraft to navigate a crowded airspace."

Applications

Stable Diffusion, the slick generative AI tool, just launched and went live on GitHub - "You’ve almost certainly already seen a torrent of high-quality text-to-image generation pics lately. Now one of the most impressive generative tools has published code and opened up to academic researchers. It’s called Stable Diffusion – and you’ll probably be hearing a lot more soon."

Robot Repeatedly Rearranges Remnants In The Round - "Sisyphus is an art installation by [Kachi Chan] featuring two scales of robots engaged in endless cyclic interaction. Smaller robots build brick arches while a giant robot pushes them down. As [Kachi Chan] says “this robotic system propels a narrative of construction and deconstruction.”"

AI Can’t Replace Human Creativity. But It Can Enhance It - "The release of Google’s Imagen tool has certainly made my news feed more entertaining in recent months. Who doesn’t want to see pictures of a raccoon dressed as an astronaut or a corgi cycling through Times Square?"

Machine Learning At The Forefront Of Telemental Health - "Michael Stefferson received his PhD in Physics from the University of Colorado before deciding to make the jump into machine learning (ML). He spent the last several years as a Machine Learning Engineer at Manifold, where he first started working on projects in the healthcare industry."

AI-generated digital art spurs debate about news illustrations - "Artificial intelligence has seeped into many creative trades — from urban planning to translations to painting. The latest: visualizations in journalism. Why it matters: Computers are getting better at doing what humans can do, including creating art from scratch."

Business

Xiaomi debuts CyberOne ahead of Tesla’s AI Day - "Xiaomi CEO, Lei Jun announced the company’s CyberOne humanoid robot at its launch event in Beijing on August 11th. The debut is ahead of Tesla’s AI Day which many are anticipating a working Optimus Bot prototype. It will be interesting to see the two robots side by side."

Zillow Launches AI Home Touring Tool Nationwide - "Zillow’s artificial intelligence guided house hunting service is now here nationwide to make your home buying experience a little less painful."

Yoodli Raises $6M in Seed Funding - "Yoodli, a Seattle, WA – based startup building an artificial intelligence platform to help people communicate effectively, raised $6m in seed funding."

SenseTime Releases AI Chinese Chess Robot - "SenseTime, an artificial intelligence software company, held a new product launch event and launched its first household consumer artificial intelligence product – “SenseRobot”, an AI Chinese chess-playing robot."

Geek+ raises another $100M for AMRs - "Geek+ has raised $100 million in Series E1 funding. The company’s last funding round closed in early 2021 but was previously undisclosed. Now, the company is valued at over $2 billion. Participating in the Series E funding round were Intel Capital, Vertex Growth, and Qingyue Capital Investment."

North America is seeing a hiring boom in pharmaceutical industry machine learning roles - "North America extended its dominance for machine learning hiring among pharmaceutical industry companies in the three months ending May. The number of roles in North America made up 63.9% of total machine learning jobs – up from 62.6% in the same quarter last year."

DoD cyber and insider threat analysis contract won by Torch.AI - "Artificial intelligence data infrastructure company Torch.AI won a contract from the U.S."

Zephyr AI lands $18.5M seed round for data-driven drug discovery - "Data-driven startup Zephyr AI has raised $18.5 million in seed funding to advance precision medicine and drug discovery with machine learning."

Gartner Identifies Key Emerging Technologies Expanding Immersive Experiences, Accelerating AI Automation and Optimizing Technologist Delivery - "Metaverse, super apps and Web3 are among the core technologies enabling evolved immersive experiences. Cloud sustainability and data observability are helping technologists deliver on emerging business demands."

Google says AI update will improve search result quality in ‘snippets’ - "If you’ve ever Googled something only to be met with a little info box highlighting the top answer, you’ve encountered one of Google’s “featured snippets.”"

Concerns

Tesla’s self-driving technology fails to detect children in the road, tests find - "A safe-technology advocacy group issued claims Tuesday that Tesla’s full self-driving software represents a potentially lethal threat to child pedestrians, the latest in a series of claims and investigations into the technology to hit the world’s leading electric carmaker."

A Lesson from Google: Can AI Bias be Monitored Internally? - "A star AI researcher was forced out of Google when she raised concerns about bias in the company’s large language models. Now tech companies must rethink their AI ethics."

Analysis

A.I. Is Not Sentient. Why Do People Say It Is? - "Robots can’t think or feel, despite what the researchers who build them want to believe."

Set Sail For Fail? On AI risk - "Existential risk due to artificial intelligence (hereafter AI risk) is worth taking seriously"

Deep Learning Alone Isn’t Getting Us To Human-Like AI - "Artificial intelligence has mostly been focusing on a technique called deep learning. It might be time to reconsider."

Policy

Baidu's robotaxis don't need any human staff in these parts of China - "Chinese tech company Baidu said Monday it has become the first robotaxi operator in China to obtain permits for selling rides with no human driver or staff member inside the vehicles."

Facial recognition smartwatches to be used to monitor foreign offenders in UK - "Migrants who have been convicted of a criminal offence will be required to scan their faces up to five times a day using smartwatches installed with facial recognition technology under plans from the Home Office and the Ministry of Justice."

Fun

I replaced all our blog thumbnails using DALL·E 2 for $45: here’s what I learned - "Blog posts with images get 2.3x more engagement."

Copyright © 2022 Skynet Today, All rights reserved.