Last Week in AI #338 - Anthropic sues Trump, xAI starting over, Iran AI Fakes

Anthropic sues Trump administration in AI dispute with Pentagon, ‘Not built right the first time’ — Musk’s xAI is starting over again, again, Cascade of A.I. Fakes About War With Iran Causes Chaos Online

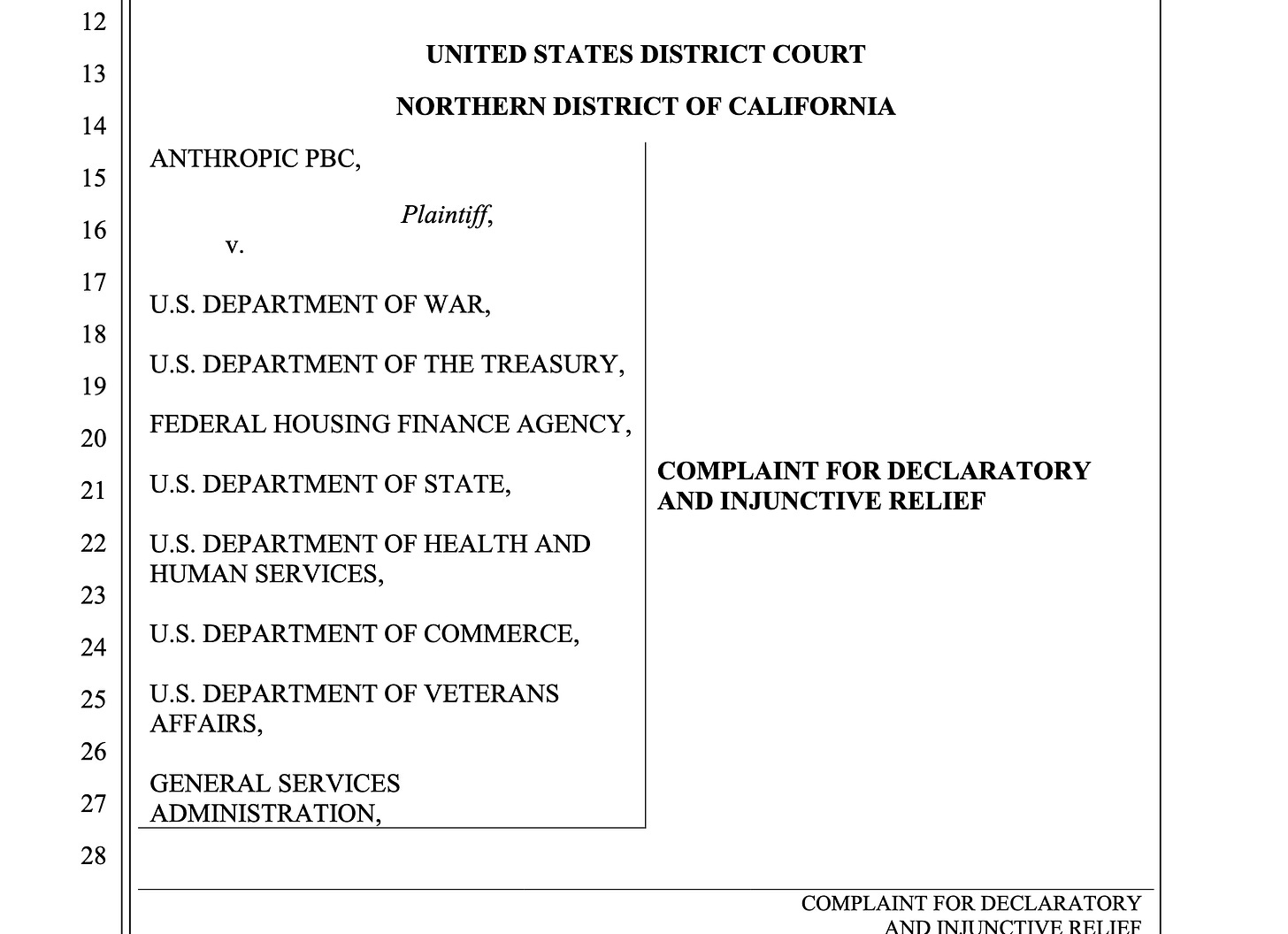

Anthropic sues Trump administration in AI dispute with Pentagon

Related:

OpenAI and Google Workers File Amicus Brief in Support of Anthropic Against the US Government

Internal Pentagon memo orders military commanders to remove Anthropic AI technology from key systems

Summary: Anthropic filed two lawsuits—one in the Northern District of California and …