The Messy History of Facial Recognition Company Clearview AI

Clearview AI, a provider of facial recognition capabilities powered by billions of images scraped from all over the web, has faced much media scrutiny and numerous legal challenges in 2020 and 2021.

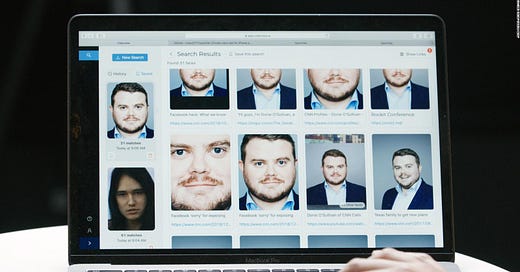

As we’ve found in our analysis of AI news in 2021, few if any topics receive as much attention in AI coverage as facial recognition, and few companies have as many news stories devoted to them as Clearview AI – whose chief product is a ‘search engine for faces,’ or the ability to find someone’s name from a photo of their face. By scraping publicly avail…
